One of the services I provide is converting neural networks to run on iOS devices.

Because neural networks by nature perform a lot of computations, it is important that they run as efficiently as possible on mobile. An efficient model is able to get real-time results on live video — without draining the battery or making the phone so hot you can fry an egg on it.

Traditional neural networks such as VGGNet and ResNet are too demanding, and I usually recommend switching to MobileNet. With this architecture, even models with over 200 layers can run at 30 FPS on older iPhones and iPads.

I’ve created a source code library for iOS and macOS with fast implementations of MobileNet V1 and V2, as well as SSDLite and DeepLabv3+.

This library makes it very easy to add MobileNet-based neural networks into your apps, for tasks such as:

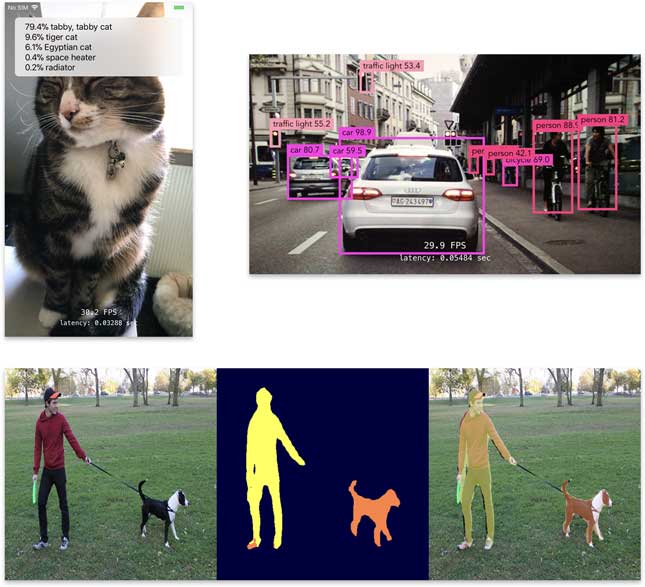

- image classification

- real-time object detection

- semantic image segmentation

- as a feature extractor that is part of a custom model

It’s common for modern neural networks to have a base network, or “backbone”, with additional layers on top for performing a specific task. MobileNet is a great backbone. I’ve helped clients implement real-time object tracking and human pose recognition models on top of the base MobileNet layers with great success.

This is a library of proven, battle-tested code that runs in apps that are currently on the App Store.

Here’s a video (YouTube link) recorded from an iPhone 7 running MobileNetV2 + SSDLite:

To make this video, I simply pointed the phone at a YouTube video playing on my Mac and recorded the iPhone’s screen with QuickTime Player. The camera runs at 30 FPS and the neural network can easily keep up, even on this older phone. (This version of SSDLite was trained on COCO. To use this in your app, you’d typically retrain it on your own dataset.)
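To put “easily keep up” into numbers: a 30 FPS camera delivers a new frame every 33.3 ms, and the object detection measurements later in this post put MobileNet V2 + SSDLite at 63 FPS on an iPhone 7 (measured without non-maximum suppression), or roughly 16 ms per frame. A trivial Swift helper for this conversion; the function name is just for illustration and is not part of the library:

```swift
/// Time per frame, in milliseconds, for a given frame rate.
func millisecondsPerFrame(fps: Double) -> Double {
    return 1000.0 / fps
}

// Budget per camera frame at 30 FPS: about 33.3 ms.
let cameraBudget = millisecondsPerFrame(fps: 30)
// MobileNet V2 + SSDLite on an iPhone 7 (63 FPS, no NMS): about 15.9 ms.
let detectorTime = millisecondsPerFrame(fps: 63)
let headroom = cameraBudget - detectorTime
print("per-frame headroom: \(headroom) ms")
```

The remaining headroom of roughly 17 ms per frame is what's left for postprocessing, drawing, and the rest of the app.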

Why MobileNet?

Many research papers present neural network architectures that are not suitable for use on mobile devices. Often a big model such as VGGNet is used as a feature extractor and new functionality is added on top.

The problem with architectures such as VGGNet, ResNet50, and Inception is that they have tens of millions of parameters and require billions of calculations for a single pass through the network. The models in research papers are often trained on clusters of very powerful GPUs. iPhones and iPads simply don’t have that kind of computing power.

The MobileNet architecture is designed to run efficiently on mobile devices. It uses “only” 3.5 to 4.2 million parameters, which is a lot less than VGG’s 130M parameters and ResNet50’s 25M parameters. It also does way fewer calculations: as few as 300 million multiply-accumulate operations (MACs) per 224×224 image, versus 4 billion or more for these larger models. MobileNet is similar in accuracy to VGGNet, so it’s a good replacement for it.
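Most of these savings come from MobileNet’s depthwise separable convolutions, which replace a regular 3×3 convolution with a 3×3 depthwise convolution (one filter per input channel) followed by a 1×1 pointwise convolution that mixes the channels. A minimal Swift sketch of the MAC count for both, assuming stride 1 and “same” padding, and ignoring biases and batch normalization; the function names are made up for this example:

```swift
/// MACs (multiply-accumulate operations) for a regular k×k convolution
/// that maps an h×w×cIn input to an h×w×cOut output.
func standardConvMACs(h: Int, w: Int, k: Int, cIn: Int, cOut: Int) -> Int {
    return h * w * k * k * cIn * cOut
}

/// MACs for a depthwise separable convolution: a k×k depthwise convolution
/// (one filter per input channel) followed by a 1×1 pointwise convolution.
func separableConvMACs(h: Int, w: Int, k: Int, cIn: Int, cOut: Int) -> Int {
    let depthwise = h * w * k * k * cIn
    let pointwise = h * w * cIn * cOut
    return depthwise + pointwise
}

// A 14×14 feature map with 512 input and 512 output channels, the same shape
// as the middle layers of MobileNet V1 for a 224×224 input.
let standard = standardConvMACs(h: 14, w: 14, k: 3, cIn: 512, cOut: 512)
let separable = separableConvMACs(h: 14, w: 14, k: 3, cIn: 512, cOut: 512)
let reduction = Double(standard) / Double(separable)
print("standard: \(standard), separable: \(separable), reduction: \(reduction)x")
```

For this layer shape the separable version needs roughly 8.8 times fewer MACs. In general the ratio is 1 / (1/cOut + 1/k²), so with 3×3 kernels it approaches 9× as the number of output channels grows.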

If you’re thinking about creating a neural network architecture for use on mobile devices, or you’re converting an architecture from a research paper, consider using MobileNet as the base network that provides extracted features to the rest of your model.

For more details on exactly how MobileNet works, see the following blog posts:

Models included in the library

The source code library includes fast implementations of the following models:

- MobileNet V1:
  - feature extractor
  - classifier
  - object detection with SSD
- MobileNet V2:
  - feature extractor
  - classifier
  - object detection with SSD or SSDLite
- DeepLab v3+ for semantic segmentation

The classifier models can be adapted to any dataset. If you’re using any of the popular training scripts, then making your model work with this library is only a matter of running a conversion script.

Example of how to use the MobileNet V2 classifier:

```swift
let classifier = MobileNetV2Classifier(commandQueue: commandQueue,
                                       parameterLoader: ParameterLoader(...),
                                       preprocessor: PreprocessorImageNet(...),
                                       inputWidth: 224,
                                       inputHeight: 224,
                                       depthMultiplier: 1,
                                       outputType: .softmax,
                                       classes: 100,
                                       inflightBuffers: 2)

let runner = Runner(commandQueue: commandQueue, inflightBuffers: 2)

let image = ... // the input image

runner.predict(network: classifier, inputImage: image,
               orientation: .up, queue: .main) { result in
    print(result.predictions)
}
```

That’s even less code than you need for using a Core ML model. 😉

The library supports all the common iOS image formats:

- UIImage, CGImage, CIImage
- CMSampleBuffer and CVPixelBuffer
- Metal textures (RGB, BGR, YCbCr)

The classifier uses MobileNet as a feature extractor and adds a classification layer on top. The library also makes it easy to combine the feature extractor with models other than classifiers. Here’s an example of how to use MobileNet V1 as the base network in a larger model:

```swift
featureExtractor = MobileNetV1FeatureExtractor(commandQueue: commandQueue,
                                               parameterLoader: loader,
                                               preprocessor: preprocessor,
                                               inputWidth: 224,
                                               inputHeight: 224,
                                               depthMultiplier: 1,
                                               pooling: .none,
                                               extractLayers: [ .pointwise11, .pointwise13 ],
                                               inflightBuffers: 2)

// when encoding the GPU commands:
featureExtractor.encode(commandBuffer: commandBuffer,
                        image: image,
                        orientation: orientation,
                        inflightIndex: inflightIndex)

let outputs = featureExtractor.outputImages(inflightIndex: inflightIndex)
let outputPointwise11 = outputs[0]
let outputPointwise13 = outputs[1]

// use these outputs as the input for additional layers
```

You can specify from which layers you want to extract the feature maps, and use these outputs as the inputs to the additional layers of your model. That is exactly what happens in an advanced model such as SSDLite.

Performance measurements

This section shows several metrics on the performance of the included MobileNet models. There are two major factors that affect the performance:

- The “depth multiplier”. This hyperparameter lets you balance the trade-off between model size, inference speed, and accuracy. Models with a smaller depth multiplier perform fewer computations and are therefore faster, but also less accurate. The measurements below are for a standard model with depth multiplier = 1.0.

- The size of the input images. Since it’s a fully-convolutional network, MobileNet accepts input images of any size. However, large images are much slower to process than small ones. The classifier measurements use standard images of 224×224 pixels.
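As a rough rule of thumb, the cost of the network grows with the square of the depth multiplier, because most of the work happens in the 1×1 pointwise convolutions, whose cost is proportional to input channels × output channels. It also grows linearly with the number of input pixels. The sketch below applies that approximation to the 569 million MACs of MobileNet V1 at 224×224 from the size table further down; the helper is hypothetical and produces estimates, not measurements (the depthwise layers scale only linearly with the multiplier, so the real savings from a smaller multiplier are a little lower):

```swift
/// Rule-of-thumb estimate (an approximation, not a measurement) of the MACs
/// for a MobileNet variant, starting from a known baseline at depth multiplier 1.0:
/// cost ~ depthMultiplier² × (number of input pixels relative to the baseline).
func estimatedMACs(baseMillions: Double, baseSize: Int = 224,
                   depthMultiplier: Double, inputSize: Int) -> Double {
    let pixelRatio = Double(inputSize * inputSize) / Double(baseSize * baseSize)
    return baseMillions * depthMultiplier * depthMultiplier * pixelRatio
}

// MobileNet V1 at depth multiplier 1.0 and 224×224 needs 569 million MACs.
let v1Base = 569.0
// Halving the depth multiplier: roughly a quarter of the work (about 142 million MACs).
let halfMultiplier = estimatedMACs(baseMillions: v1Base, depthMultiplier: 0.5, inputSize: 224)
// Doubling the input width and height: four times the pixels, four times the work.
let largerInput = estimatedMACs(baseMillions: v1Base, depthMultiplier: 1.0, inputSize: 448)
print(halfMultiplier, largerInput)
```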

Speed (frames per second)

The following table shows the maximum FPS (frames-per-second) for the classifier models running inference on a sequence of 224×224 images:

| Version | iPhone 7 | iPhone X | iPad Pro 10.5 | iPhone XS |
|---|---|---|---|---|
| MobileNet V1 | 118 | 162 | 204 | 230 |
| MobileNet V2 | 145 | 233 | 220 | 360 |

Note: The time for resizing the images from their original size to 224×224 is not included in these measurements. The tests used triple-buffering to obtain maximum throughput. The classifiers are trained on the ImageNet dataset and output predictions for 1000 categories.
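Triple buffering means the CPU can already prepare the next frame while up to three earlier frames are still being processed, so neither the CPU nor the GPU sits idle waiting for the other. A common way to do this in Metal is a DispatchSemaphore whose count equals the number of in-flight buffers: wait on it before encoding a frame, and signal it from the command buffer’s addCompletedHandler callback. The inflightBuffers and inflightIndex parameters in the code examples above suggest the library works along similar lines, but the following is only a CPU-only simulation of the general pattern, with a serial DispatchQueue standing in for the GPU; simulateInflight is a hypothetical helper, not part of the library:

```swift
import Dispatch
import Foundation

/// Hypothetical simulation of triple buffering. The "CPU" loop may run ahead of
/// the "GPU" by at most `inflightBuffers` frames. Returns the maximum number of
/// frames that were in flight at once, and how often a buffer slot was reused
/// while it was still busy (this should always be 0).
func simulateInflight(frames: Int, inflightBuffers: Int) -> (maxInFlight: Int, conflicts: Int) {
    let semaphore = DispatchSemaphore(value: inflightBuffers)
    let gpu = DispatchQueue(label: "fake.gpu")  // serial: frames finish in order, like a command queue
    let group = DispatchGroup()
    let lock = NSLock()
    var slotBusy = [Bool](repeating: false, count: inflightBuffers)
    var inFlight = 0, maxInFlight = 0, conflicts = 0

    for frame in 0..<frames {
        semaphore.wait()                          // block until one of the buffers is free
        let inflightIndex = frame % inflightBuffers
        lock.lock()
        if slotBusy[inflightIndex] { conflicts += 1 }
        slotBusy[inflightIndex] = true
        inFlight += 1
        maxInFlight = max(maxInFlight, inFlight)
        lock.unlock()

        group.enter()
        gpu.async {
            Thread.sleep(forTimeInterval: 0.002)  // stand-in for running the network
            lock.lock()
            slotBusy[inflightIndex] = false
            inFlight -= 1
            lock.unlock()
            semaphore.signal()                    // plays the role of the completion handler
            group.leave()
        }
    }
    group.wait()
    lock.lock(); defer { lock.unlock() }
    return (maxInFlight, conflicts)
}

let inflightResult = simulateInflight(frames: 30, inflightBuffers: 3)
print("max frames in flight: \(inflightResult.maxInFlight), slot conflicts: \(inflightResult.conflicts)")
```

Because the simulated GPU finishes frames in order, cycling through the slots with frame % inflightBuffers never hands out a slot that is still in use.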

The FPS results for object detection are:

| Version | iPhone 7 | iPhone X | iPad Pro 10.5 | iPhone XS |
|---|---|---|---|---|
| MobileNet V1 + SSD | 53 | 74 | 82 | 96 |
| MobileNet V2 + SSDLite | 63 | 98 | 108 | 145 |

Note: The object detection tests were performed on a 300×300 image. No postprocessing (non-maximum suppression) was applied, so these scores only measure the raw time it takes to run the neural network. The SSD models are trained on the COCO dataset.
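Non-maximum suppression is the postprocessing step that removes duplicate boxes: an SSD-style detector predicts many overlapping boxes for the same object, and NMS keeps only the highest-scoring box among those that overlap too much. A minimal greedy, class-agnostic NMS in Swift; the Detection struct, the 0.5 IoU threshold, and the function names are assumptions for this sketch, and real SSD pipelines usually run NMS separately per class:

```swift
/// A detected object: an axis-aligned box plus its confidence score.
struct Detection {
    var x: Double, y: Double, width: Double, height: Double
    var score: Double
}

/// Intersection-over-union of two boxes (0 = no overlap, 1 = identical).
func iou(_ a: Detection, _ b: Detection) -> Double {
    let left = max(a.x, b.x), top = max(a.y, b.y)
    let right = min(a.x + a.width, b.x + b.width)
    let bottom = min(a.y + a.height, b.y + b.height)
    let intersection = max(0, right - left) * max(0, bottom - top)
    let union = a.width * a.height + b.width * b.height - intersection
    return union > 0 ? intersection / union : 0
}

/// Greedy NMS: repeatedly keep the highest-scoring remaining box and discard
/// every other box that overlaps it by more than `iouThreshold`.
func nonMaxSuppression(_ detections: [Detection], iouThreshold: Double = 0.5) -> [Detection] {
    var remaining = detections.sorted { $0.score > $1.score }
    var kept: [Detection] = []
    while let best = remaining.first {
        kept.append(best)
        remaining.removeFirst()
        remaining.removeAll(where: { iou(best, $0) > iouThreshold })
    }
    return kept
}

let boxes = [
    Detection(x: 10, y: 10, width: 100, height: 100, score: 0.9),
    Detection(x: 15, y: 12, width: 100, height: 100, score: 0.8),  // near-duplicate of the first box
    Detection(x: 200, y: 200, width: 50, height: 50, score: 0.7),  // a separate object
]
let survivors = nonMaxSuppression(boxes)
print(survivors.map { $0.score })
```

This loop compares every kept box against all remaining boxes, so its cost grows quickly with the number of candidates. That is one reason it makes sense to measure it separately from the neural network itself.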

The FPS results for semantic segmentation are:

| Version | iPhone 7 | iPhone X | iPad Pro 10.5 | iPhone XS |
|---|---|---|---|---|
| DeepLabv3+ | 8.2 | 12.5 | 15.1 | 16 |

Note: The segmentation model takes a 513×513 image as input and produces a 513×513 segmentation mask. This model was trained on the Pascal VOC dataset with 20 classes. As you can see, segmentation is a lot slower than the other tasks! Part of the reason is the input size: a 513×513 image has about 5.2 times as many pixels as a 224×224 image.
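Segmentation networks such as DeepLabv3+ compute a score for every class at every pixel, and the mask is the per-pixel argmax of those scores. A minimal CPU sketch of that argmax step in Swift, assuming the scores have already been read back into a flat [Float] array in pixel-major order with one value per class (for Pascal VOC that is usually 21 values per pixel: the 20 object classes plus background). The function name and memory layout are assumptions for illustration, not how the library stores its output:

```swift
/// Converts per-pixel class scores into a segmentation mask by picking,
/// for every pixel, the class with the highest score (argmax).
/// Assumed layout: scores[pixel * numClasses + classIndex].
func segmentationMask(scores: [Float], width: Int, height: Int, numClasses: Int) -> [UInt8] {
    precondition(numClasses >= 1 && numClasses <= 256, "class index must fit in a UInt8")
    precondition(scores.count == width * height * numClasses, "unexpected number of scores")
    var mask = [UInt8](repeating: 0, count: width * height)
    for pixel in 0..<(width * height) {
        let base = pixel * numClasses
        var bestClass = 0
        var bestScore = scores[base]
        for c in 1..<numClasses where scores[base + c] > bestScore {
            bestScore = scores[base + c]
            bestClass = c
        }
        mask[pixel] = UInt8(bestClass)
    }
    return mask
}

// A tiny 2×1 "image" with 3 classes (class 0 = background).
let scores: [Float] = [0.1, 0.7, 0.2,    // pixel 0: class 1 scores highest
                       0.9, 0.05, 0.05]  // pixel 1: background scores highest
let mask = segmentationMask(scores: scores, width: 2, height: 1, numClasses: 3)
print(mask)
```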

Accuracy

The next table shows the accuracy for the classifiers on the ImageNet validation set:

| Version | Top-1 Accuracy (%) | Top-5 Accuracy (%) |
|---|---|---|
| MobileNet V1 | 70.9 | 89.9 |
| MobileNet V2 | 71.8 | 91.0 |

Note: This is the accuracy from the original TensorFlow models, not the converted Metal models. (I will update this table soon with the results from running the Metal models on the ImageNet validation set.)

Size and computation

The next table shows the size of the classifier models and how many multiply-accumulate operations they perform for inference on a single 224×224 image:

| Version | MACs (millions) | Parameters (millions) |
|---|---|---|
| MobileNet V1 | 569 | 4.24 |
| MobileNet V2 | 300 | 3.47 |

The library stores the weights as 16-bit floating point numbers. Including MobileNet V2 in your app adds approximately 7 MB to your app bundle.
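The 7 MB figure follows directly from the parameter count: 3.47 million weights at 2 bytes each is about 6.9 MB (decimal megabytes), half of what 32-bit floats would need. A small Swift helper for the arithmetic; it ignores any file-format overhead, so treat the result as an estimate:

```swift
/// Approximate size of a model's weights in (decimal) megabytes:
/// millions of parameters × bytes per parameter = megabytes.
func weightSizeMB(parametersInMillions: Double, bytesPerWeight: Double) -> Double {
    return parametersInMillions * bytesPerWeight
}

let v2Float16 = weightSizeMB(parametersInMillions: 3.47, bytesPerWeight: 2)  // 16-bit floats: about 6.9 MB
let v2Float32 = weightSizeMB(parametersInMillions: 3.47, bytesPerWeight: 4)  // 32-bit floats: about 13.9 MB
print(v2Float16, v2Float32)
```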

Why not Core ML or TensorFlow Lite?

Core ML is great, I’m a fan. If you trained your model using Keras, Caffe, or MXNet, it’s really easy to convert the model to a Core ML file and embed it in your app. TF Lite is a good choice if you trained your model using TensorFlow.

These options are (fairly) convenient, but unfortunately they are not as efficient as they could be. TF Lite is not currently GPU-accelerated on iOS, and Core ML tends to be slower than a hand-optimized Metal model.

The following table compares the maximum FPS of the Core ML and Metal versions of MobileNet V1:

| | iPhone 7 | iPhone X | iPad Pro 10.5 |
|---|---|---|---|
| Core ML | 45 | 53 | 110 |
| Core ML 2 | - | - | 120 |
| Metal | 118 | 162 | 204 |
| Speed difference | 2.6× | 3× | 1.8× |

Note: Core ML and Metal were tested on iOS 11.2 and 11.3. Core ML 2 was tested on iOS 12.0.

Yes, I couldn’t believe it either: Core ML really is that much slower. To measure the speed of the Core ML model, I used a 224×224 CVPixelBuffer as input, with triple buffering. I also tested using the model through the Vision framework but that was generally slower than using Core ML directly.

The same comparison for MobileNet V2:

| | iPhone 7 | iPhone X | iPad Pro 10.5 |
|---|---|---|---|
| Core ML | 41 | 50 | - |
| Core ML 2 | - | - | 84 |
| Metal | 145 | 233 | 220 |
| Speed difference | 3.5× | 4.6× | 2.6× |

For some reason MobileNet V2 runs slower than V1 with Core ML. The V2 model has fewer parameters but more layers, which may be why Core ML is slower.

Another downside of using Core ML is that it’s less flexible than Metal. Core ML only supports a limited number of model and layer types. If you’re doing cutting-edge work with new layers or activation functions, chances are Core ML can’t help you. While it’s now possible to create custom Core ML layers, I find it easier to just implement the entire model with Metal.

I recommend using Core ML for quickly iterating on your model, but for the final version that goes into your app, nothing beats the raw power of Metal code.

Isn’t Core ML super fast on the iPhone XS?

Yes, it is! Thanks to the A12’s amazing Neural Engine, Core ML achieves almost unbelievable speeds. MobileNet V1 and MobileNet V2 easily run at over 240 FPS — and if you really push it you can get them up to 600 FPS!

If your app is going to primarily support the iPhone XS, and you’re OK with much worse performance on previous iPhone models, then Core ML is the best choice. But if you still need to support iPhone 7, 8, or X, then my Metal-based library will give better performance than Core ML on those devices.

The Neural Engine also uses different trade-offs than the GPU. Even though some models run much faster on the Neural Engine, it turns out MobileNetV2+SSDLite is actually slower on the Neural Engine than on the GPU. ¯\_(ツ)_/¯

Note: Core ML uses the Neural Engine to get these amazing speeds, but it’s unlikely your model can benefit from this if it requires custom layers. There is currently no way for developers to program the Neural Engine directly, so custom layers can’t run on it, and Core ML will need to run at least part of your model on the CPU or GPU. You’ll only be able to benefit from the speed of the Neural Engine if your model uses only the layer types that are supported by Core ML, and if its architecture is compatible with the design trade-offs of the Neural Engine.

What do you get?

With this library you get the full Swift source code for MobileNet V1 and V2, as well as SSD, SSDLite, and DeepLabv3+.

The code is written using the Metal and Metal Performance Shaders frameworks to make optimal use of the GPU.

Also included are:

- Conversion scripts. These scripts read trained models from TensorFlow, Keras, Caffe etc. and convert the weights so they can be loaded into the Metal versions of the models.

- Convenient helper classes that make it easy to put the models into your own apps and interpret their predictions.

- Pre-trained models to quickly get started.

- Documentation of how to use the API.

- Example apps. These apps show how to use the models with live video from the iPhone camera, the photo library, ARKit, and so on.

The library is compatible with iOS 11, and runs on devices with an A8 processor or better (iPhone 6 and up).

Note: Due to iOS limitations, the GPU cannot be used while the app is in the background. If your app needs to run a neural network while the app is backgrounded, you cannot use this library. In that case, using Core ML or TF Lite is a better choice. Alternatively, if neither Core ML nor TF Lite is a suitable solution, I can convert your model to use highly optimized CPU routines, to squeeze out the maximum speed possible.

Pricing

Price: send me an email

That probably makes this library sound more expensive than it really is. 🤑

The reason I’m reluctant to mention a price here is that usually a certain amount of consulting is needed to fit this code to your needs. You’re welcome to license the library as-is, but I’d rather customize the code to your specific requirements as that makes for better apps and happier clients.

What sort of custom work is typical?

- converting the weights from your training package to Metal, especially if your model uses a slightly different architecture or you trained on a dataset that has special considerations

- you’re using MobileNet as a feature extractor in a larger network and also need to convert the rest of the layers

- your model requires custom GPU kernels

- debugging: small differences in implementations may cause problems if you don’t know what to look for — for example, the Keras version of MobileNet recently changed how the convolution layers were zero-padded, a small detail that can throw off the accuracy of your model if you’re not aware of it

- seamless integration of the model into your app

Get in touch to give your mobile app the power of state-of-the-art neural networks!