This post explains how you can take advantage of Swift’s type system to make your programs more expressive and robust.

For the last week or so I’ve been playing with machine learning algorithms in Swift. You can often implement these algorithms really succinctly and efficiently by using matrices.

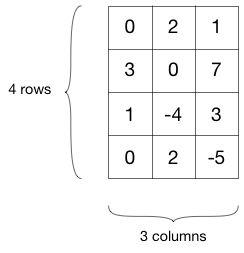

In case you forgot your linear algebra, just think of a matrix as a table of numbers.

When we say, “M is a 4-by-3 matrix”, we mean that M is a table of 4 rows and 3 columns. For the purposes of this article, that’s all you really need to know about matrices.

This is an example of a 4×3 matrix:

So I wrote some code and created a struct Matrix:

    struct Matrix {
        let rows: Int
        let columns: Int
        ...
    }

One thing you often need to do with matrices is multiply them, and I created a function for that:

    func multiply(m1: Matrix, _ m2: Matrix) -> Matrix {
        // bunch of math...
    }

This may all seem very straightforward but there’s something here that bothers me.

Even though m1 and m2 are both Matrix objects, they may actually have different numbers of rows and columns. And that could be a problem.

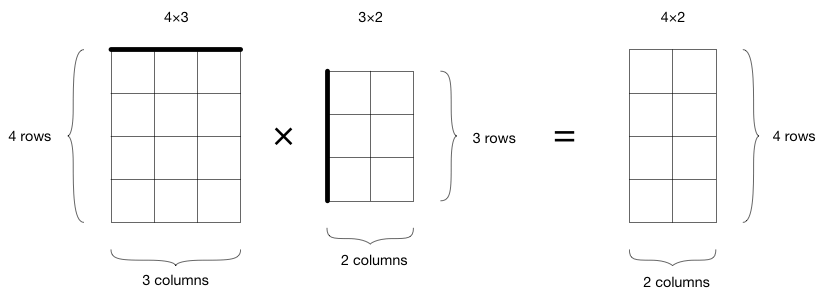

For example, with matrix multiplication the sizes of the two matrices have to match up in a particular way:

The number of columns in the first matrix has to be the same as the number of rows in the second matrix. If the size of the first matrix is U × V, then the size of the second matrix has to be V × W. That’s how the math works.

The result is a new matrix of size U × W. If the sizes of the matrices don’t match up in this particular way, we can’t multiply them.

For example, the following will work fine:

    let A = Matrix(rows: 4, columns: 3)
    let B = Matrix(rows: 3, columns: 2)
    let C = multiply(A, B) // gives a 4×2 matrix

Note: In math, matrices are often denoted with capitals, and I’m following that convention here for the variable names.

Multiplying A by B is allowed because A.columns == B.rows. On the other hand, the following is not a valid operation:

    let D = multiply(B, A)

The number of columns in matrix B doesn’t match the number of rows in matrix A. Here, B.columns is 2 but A.rows is 4, so B.columns != A.rows. Mathematically, B times A is undefined.

Currently, the only way to catch these sorts of mistakes is by tripping an assertion at runtime:

    func multiply(m1: Matrix, _ m2: Matrix) -> Matrix {
        // do the matrices have the correct sizes?
        precondition(m1.columns == m2.rows)

        // bunch of math...
    }
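To make the runtime check concrete, here is a minimal sketch of what this non-generic version might look like with the elided math filled in. The row-major `values` array and the subscript are assumptions for illustration, not the post's actual implementation. The syntax targets current Swift rather than 2.2, which is why the first parameter has an explicit `_`.

```swift
// A sketch only: the storage layout and subscript are assumptions,
// since the post elides the body of Matrix.
struct Matrix {
    let rows: Int
    let columns: Int
    var values: [Double]   // row-major: element (r, c) lives at r * columns + c

    init(rows: Int, columns: Int) {
        self.rows = rows
        self.columns = columns
        self.values = [Double](repeating: 0, count: rows * columns)
    }

    subscript(r: Int, c: Int) -> Double {
        get { return values[r * columns + c] }
        set { values[r * columns + c] = newValue }
    }
}

func multiply(_ m1: Matrix, _ m2: Matrix) -> Matrix {
    // The size rule is only checked when this line actually runs.
    precondition(m1.columns == m2.rows, "inner dimensions must match")

    var result = Matrix(rows: m1.rows, columns: m2.columns)
    for i in 0..<m1.rows {
        for j in 0..<m2.columns {
            var sum = 0.0
            for k in 0..<m1.columns {
                sum += m1[i, k] * m2[k, j]
            }
            result[i, j] = sum
        }
    }
    return result
}

var A = Matrix(rows: 2, columns: 3)
A.values = [1, 2, 3,
            4, 5, 6]
var B = Matrix(rows: 3, columns: 1)
B.values = [1, 0, -1]

let C = multiply(A, B)   // a 2×1 result
// multiply(B, A) would compile as well, but trap at runtime,
// because B.columns (1) != A.rows (2).
```

The compiler accepts both argument orders here, so the mistake only shows up when that particular call is executed.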

Certainly doable, but I don’t like it. The whole point of Swift’s static typing is that the compiler can catch as many programming errors as possible at compile time. It would be nice if we could make the compiler catch this kind of error too.

It turns out we can! In this article I’ll explore how to use Swift’s type system to make such mistakes impossible.

Not a great solution

The naive way to approach this problem is to create different structs for matrices of different sizes:

    let A = Matrix_4x3()
    let B = Matrix_3x2()

But then you also need a multiply() function that takes these specific types as parameters:

    func multiply(m1: Matrix_4x3, _ m2: Matrix_3x2) -> Matrix_4x2

That seems a bit silly and leads to a lot of duplicate code.

What’s worse, you may not actually know the sizes of your matrices at compile time. In a machine learning problem you often need to load a dataset from a file but you won’t know in advance how many rows it has.

So this isn’t a workable solution. However, the idea of declaring different types for matrices of different sizes is promising…

Generics to the rescue

We want to incorporate the dimensions of the matrix into the type of Matrix somehow, without requiring that any of the matrix code, such as multiply(), knows anything about specific sizes.

Let’s define Matrix as follows:

    struct Matrix<R,C> {
        let rows: Int
        let columns: Int
        ...
    }

It now has two generic parameters, R and C, where R stands for the number of rows and C for the number of columns.

We can then define multiply() like this:

    func multiply<U,V,W>(m1: Matrix<U,V>, _ m2: Matrix<V,W>) -> Matrix<U,W> {
        // bunch of math...
        return Matrix(rows: m1.rows, columns: m2.columns)
    }

Notice how this captures the rule for matrix multiplication: a matrix of size U × V times a matrix of size V × W gives a new matrix of size U × W.

Here’s an example of how to use this new Matrix:

    struct NumExamples {}
    struct NumFeatures {}
    struct OneDimensional {}

    let A = Matrix<NumExamples, NumFeatures>(rows: 20, columns: 10)
    let B = Matrix<NumFeatures, OneDimensional>(rows: 10, columns: 1)

We’ve created three new types — named NumExamples, NumFeatures, and OneDimensional — to represent the possible dimensions of our matrices. Note how I’ve given these types descriptive names, so it’s easier to tell their purpose.

The names NumExamples and NumFeatures come from machine learning, since that’s what I’m going to use these matrices for. NumExamples is the number of objects in your dataset and NumFeatures is the number of attributes that each example has. (Of course, if you were going to use the matrices for something else, you’d use different names.)

OneDimensional tells you that the matrix B only has one column. In linear algebra, we’d call that a column vector instead of a matrix. To make that distinction clearer in our code, it would be cool if we could write something like this:

    typealias ColumnVector<Rows> = Matrix<Rows, OneDimensional>
    typealias RowVector<Columns> = Matrix<OneDimensional, Columns>

Those typealiases would make ColumnVector and RowVector special cases of Matrix. But unfortunately this syntax is not supported in Swift 2.2. It might be coming in Swift 3.0.
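For readers on Swift 3 or later, generic type aliases are accepted by the compiler, so the two declarations above work as written. Here's a minimal sketch, assuming the simple two-property `Matrix` from this section; the `weights` and `sameType` names are hypothetical.

```swift
// Sketch for Swift 3 or later, where generic type aliases are available.
struct OneDimensional {}
struct NumFeatures {}

struct Matrix<R, C> {
    let rows: Int
    let columns: Int
}

typealias ColumnVector<Rows> = Matrix<Rows, OneDimensional>
typealias RowVector<Columns> = Matrix<OneDimensional, Columns>

// ColumnVector<NumFeatures> is just another spelling of
// Matrix<NumFeatures, OneDimensional>, so the two are interchangeable.
let weights = ColumnVector<NumFeatures>(rows: 10, columns: 1)
let sameType: Matrix<NumFeatures, OneDimensional> = weights
```

The alias introduces no new type, so any function that takes a `Matrix<V, OneDimensional>` accepts a `ColumnVector<V>` unchanged.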

Anyway, back to the example. When you now write,

    let C = multiply(A, B)

it gives a new 20×1 matrix, as expected. Unlike before, however, an invalid attempt at multiplication gives a compiler error:

    let D = multiply(B, A)
    // error: cannot convert value of type 'Matrix<NumFeatures, OneDimensional>'
    // to expected argument type 'Matrix<_, _>'

The error message is a bit vague, but what’s great is that we’ve used Swift’s type system to catch this kind of error. Instead of crashing the app at runtime, it is now impossible to multiply two matrices that do not have the correct dimensions.

Or is it? Well, you can still lie to the compiler:

    let A = Matrix<NumExamples, NumFeatures>(rows: 20, columns: 10)
    let B = Matrix<NumFeatures, OneDimensional>(rows: 500, columns: 1)

By changing B’s number of rows to 500, we end up in the same situation as before: multiply(A, B) still compiles, but the multiplication is no longer valid, because A has 10 columns while B now has 500 rows.

Just having these extra types is not enough… We need to make sure that the type NumFeatures somehow always refers to the same number, regardless of where it is used.
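The gap between what the types claim and what the values contain can be made visible with a small check. This is only a sketch: `sizesAgree`, `honestB`, and `lyingB` are hypothetical names introduced here, not part of the post's code.

```swift
struct NumExamples {}
struct NumFeatures {}
struct OneDimensional {}

struct Matrix<R, C> {
    let rows: Int
    let columns: Int
}

// Hypothetical helper: the signature enforces the tag rule at compile time,
// the body checks whether the runtime sizes actually follow it.
func sizesAgree<U, V, W>(_ m1: Matrix<U, V>, _ m2: Matrix<V, W>) -> Bool {
    return m1.columns == m2.rows
}

let A = Matrix<NumExamples, NumFeatures>(rows: 20, columns: 10)
let honestB = Matrix<NumFeatures, OneDimensional>(rows: 10, columns: 1)
let lyingB = Matrix<NumFeatures, OneDimensional>(rows: 500, columns: 1)

// Both calls type-check, because the compiler only compares the tags.
let ok = sizesAgree(A, honestB)   // sizes match the tags
let bad = sizesAgree(A, lyingB)   // tags match, sizes do not
```

Nothing connects the empty tag types to the integers passed to the initializer, which is the hole the next section closes.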

Protocols to the rescue

We could do something like this:

    struct NumExamples { let size = 20 }
    struct NumFeatures { let size = 10 }

But this fixes the sizes of these dimensions at compile time. Remember, we want to be able to set the matrix sizes at runtime, for example by reading a dataset from a file — and we may not know beforehand how much data is in that file. Hardcoding the matrix sizes is a no-go.

Instead, let’s define a new protocol:

    protocol Dimension {
        static var size: Int { get set }
    }

Then Matrix becomes:

    struct Matrix<R: Dimension, C: Dimension> {
        let rows: Int
        let columns: Int

        init() {
            self.rows = R.size
            self.columns = C.size
        }
    }

Notice that there is no longer an init(rows:columns:) initializer. The size of the matrix comes directly from the types R and C.

The final step is to make our dimension types conform to the new protocol:

    struct NumExamples: Dimension { static var size = 20 }
    struct NumFeatures: Dimension { static var size = 10 }
    struct OneDimensional: Dimension { static var size = 1 }

Now we can write multiply() as follows:

    func multiply<U: Dimension, V: Dimension, W: Dimension>
        (m1: Matrix<U,V>, _ m2: Matrix<V,W>) -> Matrix<U,W> {
        // bunch of math...
        return Matrix<U,W>()
    }

It is now impossible that matrices m1 and m2 don’t match up. The compiler simply won’t accept it.

    let A = Matrix<NumExamples, NumFeatures>()
    let B = Matrix<NumFeatures, OneDimensional>()

    let C = multiply(A, B) // yay!
    let D = multiply(B, A) // compiler error
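Putting the pieces together, here is a self-contained sketch of the protocol-based design with the math filled in. The `values` storage, the subscript, and the small sizes (2, 3, 1) are assumptions chosen to keep the example readable; the post itself elides these details. It is written for current Swift in the Swift 5 language mode.

```swift
protocol Dimension {
    static var size: Int { get set }
}

// Small sizes so the numbers are easy to follow (an assumption).
struct NumExamples: Dimension { static var size = 2 }
struct NumFeatures: Dimension { static var size = 3 }
struct OneDimensional: Dimension { static var size = 1 }

struct Matrix<R: Dimension, C: Dimension> {
    let rows: Int
    let columns: Int
    var values: [Double]   // row-major storage (an assumption)

    init() {
        self.rows = R.size
        self.columns = C.size
        self.values = [Double](repeating: 0, count: R.size * C.size)
    }

    subscript(r: Int, c: Int) -> Double {
        get { return values[r * columns + c] }
        set { values[r * columns + c] = newValue }
    }
}

func multiply<U: Dimension, V: Dimension, W: Dimension>(
    _ m1: Matrix<U, V>, _ m2: Matrix<V, W>) -> Matrix<U, W> {
    // Safety net in case a dimension's size changed between initializations.
    precondition(m1.columns == m2.rows)

    var result = Matrix<U, W>()
    precondition(result.rows == m1.rows && result.columns == m2.columns)

    for i in 0..<m1.rows {
        for j in 0..<m2.columns {
            var sum = 0.0
            for k in 0..<m1.columns {
                sum += m1[i, k] * m2[k, j]
            }
            result[i, j] = sum
        }
    }
    return result
}

var A = Matrix<NumExamples, NumFeatures>()      // 2×3
A.values = [1, 2, 3,
            4, 5, 6]
var B = Matrix<NumFeatures, OneDimensional>()   // 3×1
B.values = [1, 1, 1]

let C = multiply(A, B)   // inferred as Matrix<NumExamples, OneDimensional>
// let D = multiply(B, A)   // rejected by the compiler: the inner dimensions differ
```

The result type never has to be spelled out: the compiler derives it from the two argument types.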

There is no longer a way to make inadvertent mistakes. Of course, you can still cheat the system by doing something like this:

    let A = Matrix<NumExamples, NumFeatures>()
    NumFeatures.size = 500
    let B = Matrix<NumFeatures, OneDimensional>()

Even Swift’s type system can’t stop you if you’re intent on being evil! (It’s probably smart to keep that precondition() inside multiply().)

By the way, you actually need that ability to change NumFeatures.size. But you should use it carefully. Just as we don’t know what this particular size will be until we run the program, there’s no reason why it should remain the same the whole time. For example, you might need to process multiple datasets of different sizes using the same routines.
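One way to picture the careful use of that mutability is to set the sizes in one place, right before building the matrices for a dataset. The sketch below assumes a hypothetical `makeDesignMatrix` helper and made-up dataset sizes. It also shows that a matrix keeps the sizes it was created with.

```swift
protocol Dimension {
    static var size: Int { get set }
}

struct NumExamples: Dimension { static var size = 0 }
struct NumFeatures: Dimension { static var size = 0 }

struct Matrix<R: Dimension, C: Dimension> {
    let rows: Int
    let columns: Int

    init() {
        self.rows = R.size      // copied once, at creation time
        self.columns = C.size
    }
}

// Hypothetical helper: in a real program the two counts would come from
// the file that was just read.
func makeDesignMatrix(examples: Int, features: Int) -> Matrix<NumExamples, NumFeatures> {
    NumExamples.size = examples
    NumFeatures.size = features
    return Matrix<NumExamples, NumFeatures>()
}

let first = makeDesignMatrix(examples: 20, features: 10)
let second = makeDesignMatrix(examples: 500, features: 10)

// `first` still reports 20 rows, although NumExamples.size is now 500.
// Mixing `first` and `second` in one computation would type-check, which is
// why the precondition() inside multiply() is worth keeping.
```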

Of course, you can do more with matrices than just multiply them. Here’s another example of where these dimension types come in useful:

    func processData<M: Dimension, N: Dimension>
        (X: Matrix<M, N>, _ y: Matrix<M, OneDimensional>)
        -> Matrix<OneDimensional, N> {
        // do impressive stuff...
    }

    let X = Matrix<NumExamples, NumFeatures>()
    let y = Matrix<NumExamples, OneDimensional>()
    processData(X, y)

This function takes a matrix X and a column vector y and does some work on them; for example, it might train a learning system. The constraint here is that X and y must have the same number of rows. Thanks to our dimension types, the compiler can enforce that constraint.
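To see the shared `M` at work, here is a runnable sketch with a stand-in body. The computation (the row vector yᵀX), the `values` storage, the small sizes, and the `summary` name are all assumptions for illustration. The post does not say what processData() actually does.

```swift
protocol Dimension {
    static var size: Int { get set }
}

struct NumExamples: Dimension { static var size = 2 }
struct NumFeatures: Dimension { static var size = 3 }
struct OneDimensional: Dimension { static var size = 1 }

struct Matrix<R: Dimension, C: Dimension> {
    let rows: Int
    let columns: Int
    var values: [Double]   // row-major storage (an assumption)

    init() {
        self.rows = R.size
        self.columns = C.size
        self.values = [Double](repeating: 0, count: R.size * C.size)
    }

    subscript(r: Int, c: Int) -> Double {
        get { return values[r * columns + c] }
        set { values[r * columns + c] = newValue }
    }
}

// Stand-in body: weights each row of X by the matching entry of y and sums
// the rows, giving a 1×N row vector.
func processData<M: Dimension, N: Dimension>(
    _ X: Matrix<M, N>, _ y: Matrix<M, OneDimensional>) -> Matrix<OneDimensional, N> {
    precondition(X.rows == y.rows)

    var result = Matrix<OneDimensional, N>()
    precondition(result.columns == X.columns)

    for j in 0..<X.columns {
        var sum = 0.0
        for i in 0..<X.rows {
            sum += y[i, 0] * X[i, j]
        }
        result[0, j] = sum
    }
    return result
}

var X = Matrix<NumExamples, NumFeatures>()      // 2×3
X.values = [1, 2, 3,
            4, 5, 6]
var y = Matrix<NumExamples, OneDimensional>()   // 2×1
y.values = [1, 2]

let summary = processData(X, y)   // Matrix<OneDimensional, NumFeatures>
```

Passing a `y` tagged with any dimension other than `NumExamples` would fail to compile, because both parameters have to agree on `M`.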

Conclusion

We used types to better express to the compiler what our program is doing. And that helps the compiler catch mistakes.

First published on Friday, 25 March 2016.

If you liked this post, say hi on Twitter @mhollemans or LinkedIn.

Find the source code on my GitHub.
